I am thrilled to announce an update to pet.xryptic.com, your go-to interactive hub for learning about Privacy-Enhancing Technologies (PETs). I have just launched two new demo applications designed to demystify Trusted Execution Environments (TEEs) and Differential Privacy (DP).

The new demos let you get hands-on with both technologies, moving beyond theory to see how they work in practice. Whether you’re a student, a researcher, or simply a privacy enthusiast, these interactive tools will help you grasp the fundamental concepts behind two critical PETs. Let’s dive into what’s new!

TEE: The Tale of Trust

The first new demo explores the fascinating world of TEEs. A TEE is like a secure, isolated vault within a processor, designed to protect the confidentiality and integrity of the code and data loaded inside.

To help you understand this concept, I’ve brought to life “The Tale of Trust.” Imagine a scenario with three characters:

- General Gallant: A high-ranking military official with sensitive trade secret data. He’s naturally suspicious and distrustful of others.

- Engineer Clever: A brilliant algorithm developer who has created a powerful model to analyze data, but he needs access to the General’s data to improve it.

- Professor Trust: The wise intermediary who proposes a solution that satisfies both parties.

The problem is simple: The General doesn’t trust the Engineer with his raw data, and the Engineer doesn’t want to give away his intellectual property (the algorithm) to the General. How can they collaborate?

Professor Trust introduces them to the concept of a TEE. He explains that they can create a “secure enclave” – a digital vault that neither the General nor the Engineer can peek into while it’s running. The General puts his data in, the Engineer puts his code in, and the TEE securely executes the analysis. The General gets the results without revealing his data, and the Engineer’s algorithm remains protected.
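
As a rough illustration of the flow Professor Trust describes, here is a toy Python stand-in. It is not a real TEE: ordinary Python offers no hardware isolation, and the class name `ToyEnclave`, its methods, and the sample readings are all hypothetical. It only mirrors the shape of the interaction, where data goes in, code goes in, and only the result comes out.

```python
from statistics import mean
from typing import Callable, Optional, Sequence


class ToyEnclave:
    """Toy stand-in for a TEE (hypothetical; provides NO real isolation)."""

    def __init__(self) -> None:
        # Double underscores trigger Python name mangling. This only
        # discourages casual access; it is not a security boundary.
        self.__data: Optional[list] = None
        self.__code: Optional[Callable[[Sequence[float]], float]] = None

    def load_data(self, data: Sequence[float]) -> None:
        """The General loads his data; there is no method to read it back."""
        self.__data = list(data)

    def load_code(self, code: Callable[[Sequence[float]], float]) -> None:
        """The Engineer loads his algorithm; there is no method to read it back."""
        self.__code = code

    def run(self) -> float:
        """Run the loaded code on the loaded data and return only the result."""
        if self.__data is None or self.__code is None:
            raise RuntimeError("both data and code must be loaded before running")
        return self.__code(self.__data)


enclave = ToyEnclave()
enclave.load_data([4.0, 8.0, 15.0, 16.0, 23.0, 42.0])  # made-up data for the General
enclave.load_code(mean)  # made-up "algorithm" for the Engineer
result = enclave.run()
print(result)
```

In a real TEE the isolation is enforced by the processor rather than by a class interface, so treat this purely as a mental model of who hands over what.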

Head over to the TEE demo to play through this scenario and see how a TEE facilitates secure collaboration between distrusting parties!

DP: The Blurry Photo

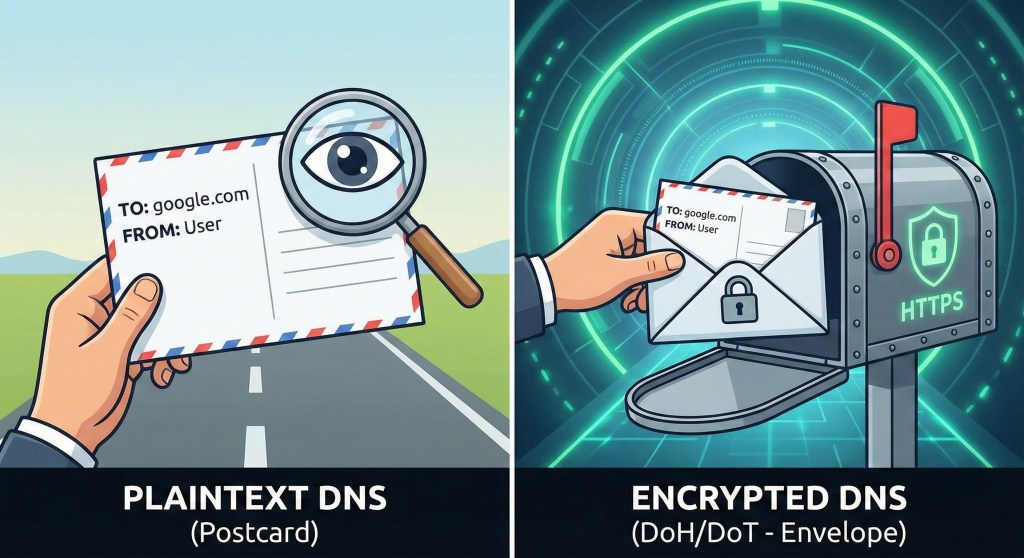

The second new demo is all about DP. DP is a powerful mathematical framework for protecting individual privacy when analysing datasets. It lets you gain insights from a group’s data while limiting what anyone can learn about any specific individual within that group.

To explain this, I use “The Blurry Photo” analogy. Imagine you have a high-resolution photo of a crowd of people. In this original state, you can clearly identify a person’s face. This represents a dataset with no privacy protection – anyone with access can see the raw, individual data points.

Now, imagine you want to share the general characteristics of the crowd (e.g., how many people are wearing hats, the sex distribution) without revealing the identity of any single person. You can apply a “blur” or add random “noise” to the photo. The more noise you add, the blurrier the photo becomes. You can still see the overall shape of the crowd and general trends, but individual faces become unrecognisable.

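This “blur” is the essence of DP. By adding a carefully calibrated amount of random noise to query results, you can provide useful aggregate information while making it statistically hard to determine whether any specific individual’s data was included in the analysis. How hard is controlled by the privacy budget: a smaller budget means more noise and stronger privacy, at the cost of less accurate results.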
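
To make the “blur” concrete, here is a minimal sketch of one standard way to add such noise, the Laplace mechanism, applied to the hat-counting example. It is not taken from the demo itself: the function names, the made-up crowd, and the chosen epsilon values are assumptions for illustration. Here `epsilon` plays the role of the privacy budget.

```python
import random


def laplace_noise(scale: float, rng: random.Random) -> float:
    """Sample Laplace(0, scale) noise.

    The difference of two independent exponential samples with mean
    `scale` follows a Laplace distribution with that scale.
    """
    return rng.expovariate(1.0 / scale) - rng.expovariate(1.0 / scale)


def noisy_count(records, predicate, epsilon: float, rng: random.Random) -> float:
    """Return a count of matching records with Laplace noise added."""
    if epsilon <= 0:
        raise ValueError("epsilon must be positive")
    true_count = sum(1 for record in records if predicate(record))
    # Adding or removing one person changes a count by at most 1,
    # so the sensitivity is 1 and the noise scale is 1 / epsilon.
    return true_count + laplace_noise(1.0 / epsilon, rng)


# Made-up crowd of 1000 people in which every fourth person wears a hat.
crowd = [{"hat": i % 4 == 0} for i in range(1000)]
rng = random.Random(0)  # seeded only so the sketch is repeatable

for epsilon in (0.1, 1.0, 10.0):
    answer = noisy_count(crowd, lambda person: person["hat"], epsilon, rng)
    print(f"epsilon={epsilon}: noisy hat count = {answer:.1f}")
```

With a small `epsilon` the reported count can drift noticeably from the true value of 250, which corresponds to a blurrier photo. With a large `epsilon` the answer stays close to 250, but it reveals more about whether any one person is in the crowd.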

Visit the DP demo to experiment with the “Blurry Photo” concept: adjust the privacy budget and see how it affects both privacy and data utility!
